Due to the high intertask communication traffic, IPC becomes a critical subsystem for microkernels, putting high demands on the speed, latency and reliability of the IPC model and its implementation. Although an asynchronous messaging system looks promising in theory, it is not often implemented because it complicates the implementation of end-user applications. HelenOS implements a fully asynchronous messaging system with a special layer that provides the application developer with a reasonably synchronous multithreaded environment, sufficient to develop complex protocols.

Every message consists of four numeric arguments (32-bit or 64-bit, depending on the platform), of which the first is interpreted as a method number when a message is received and as a return value when an answer is received. The received message contains an identification of the incoming connection, so that the receiving application can distinguish messages from different senders. Internally, the message contains a pointer to the originating task and to the source of the communication channel. If the message is forwarded, the originating task identifies the recipient of the answer, while the source channel identifies the connection in case of a hangup response.
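
The message layout described above can be sketched in C as follows. This is only an illustration: the names `msg_t`, `arg_t`, `in_phone` and the helper functions are hypothetical and are not taken from the HelenOS sources, and the use of `uintptr_t` for a platform-sized argument is an assumption.

```c
#include <stdint.h>

/* Hypothetical names; the real HelenOS definitions may differ. */
#define MSG_ARGS 4

/* Assumed to be 32-bit or 64-bit, matching the platform word size. */
typedef uintptr_t arg_t;

typedef struct {
    /* args[0] is the method number in a request and the return value
     * in an answer; the remaining arguments are free for the user. */
    arg_t args[MSG_ARGS];
    /* Identifies the incoming connection on the receiving side. */
    int in_phone;
} msg_t;

/* Read the first argument of a received request. */
arg_t msg_method(const msg_t *m)
{
    return m->args[0];
}

/* Read the first argument of a received answer. */
arg_t msg_retval(const msg_t *m)
{
    return m->args[0];
}

/* Reuse the request structure for the answer: the first slot now
 * carries the return value instead of the method number. */
void msg_set_answer(msg_t *m, arg_t retval, arg_t a1, arg_t a2, arg_t a3)
{
    m->args[0] = retval;
    m->args[1] = a1;
    m->args[2] = a2;
    m->args[3] = a3;
}
```

The same slot serves two purposes, so a receiver must know whether it is looking at a request or an answer before interpreting the first argument.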

Every message must eventually be answered. The system keeps track of all messages, so that it can answer them with an appropriate error code should one of the connection parties fail unexpectedly. To limit the buffering of messages in the kernel, every task has a limit on the number of asynchronous messages it can have in flight simultaneously. If the limit is reached, the kernel refuses to send any further message until some active message is answered.
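
A minimal sketch of such a per-task limit is shown below. The constant `MAX_ASYNC_CALLS`, the structure `task_t` and both functions are hypothetical names chosen for illustration; the actual limit and bookkeeping in the kernel are not specified in this text.

```c
#include <stdbool.h>

/* Hypothetical limit; the real value is not stated in this document. */
#define MAX_ASYNC_CALLS 4

typedef struct {
    /* Asynchronous messages sent by the task and not yet answered. */
    int active_calls;
} task_t;

/* Try to send one asynchronous message on behalf of the task.
 * Returns true if the message was accepted, false if the task has
 * reached its limit and must wait for an answer first. */
bool task_send_async(task_t *t)
{
    if (t->active_calls >= MAX_ASYNC_CALLS)
        return false;
    t->active_calls++;
    return true;
}

/* Account for an answer to one of the task's active messages. */
void task_answer_received(task_t *t)
{
    if (t->active_calls > 0)
        t->active_calls--;
}
```

Under these assumptions, a fifth send is refused until at least one of the four active messages has been answered.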

To facilitate kernel-to-user communication, the IPC subsystem provides notification messages. Applications can subscribe to a notification channel and receive messages directed to this channel. Such messages can be freely sent even from interrupt context, as they are primarily intended to deliver IRQ events to userspace device drivers. These messages need not be answered, because there is no party that could receive such a response.

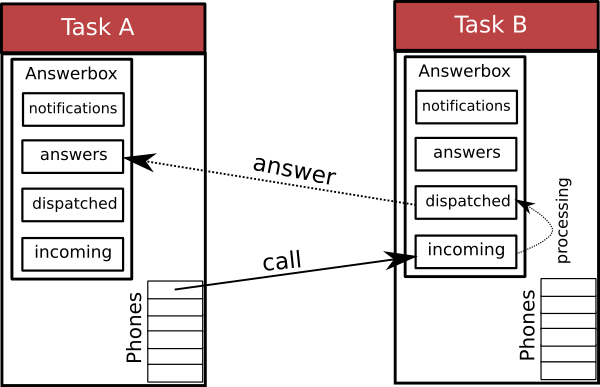

The whole IPC subsystem consists of one-way communication channels. Each task has one associated message queue (answerbox). The task can call other tasks and connect its phones to their answerboxes, send and forward messages through these connections and answer received messages. Every sent message is identified by a unique number, so that the response can be later matched against it. The message is sent over the phone to the target answerbox. The server application periodically checks the answerbox and pulls messages from several queues associated with it. After completing the requested action, the server sends a reply back to the answerbox of the originating task. If a need arises, it is possible to forward a received message through any of the open phones to another task. This mechanism is used e.g. for opening new connections to services via the naming service.

The answerbox contains four different message queues:

- Incoming call queue
- Dispatched call queue
- Answer queue
- Notification queue

Communication between task A and task B, to which A is connected, proceeds as follows: task A sends a message over its phone to the target answerbox. The message is saved in task B's incoming call queue. When task B fetches the message for processing, it is automatically moved into the dispatched call queue. After the server decides to answer the message, it is removed from the dispatched call queue and the result is moved into the answer queue of task A.
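
The movement of a call between the queues can be modelled with a small single-threaded simulation. Everything here is a sketch under stated assumptions: the fixed-size ring buffer, the type names `call_t`, `queue_t` and `answerbox_t`, and the functions `ipc_send`, `ipc_fetch` and `ipc_answer` are hypothetical and do not reproduce the kernel implementation.

```c
#include <stdbool.h>
#include <stddef.h>

#define QUEUE_CAP 8   /* hypothetical capacity for this simulation */

typedef struct {
    int id;        /* unique number used to match the answer */
    long retval;   /* filled in when the call is answered */
} call_t;

typedef struct {
    call_t items[QUEUE_CAP];
    size_t head, count;
} queue_t;

bool queue_push(queue_t *q, call_t c)
{
    if (q->count == QUEUE_CAP)
        return false;
    q->items[(q->head + q->count) % QUEUE_CAP] = c;
    q->count++;
    return true;
}

bool queue_pop(queue_t *q, call_t *out)
{
    if (q->count == 0)
        return false;
    *out = q->items[q->head];
    q->head = (q->head + 1) % QUEUE_CAP;
    q->count--;
    return true;
}

typedef struct {
    queue_t incoming;       /* calls waiting to be fetched */
    queue_t dispatched;     /* calls fetched but not yet answered */
    queue_t answers;        /* answers to calls sent by this task */
    queue_t notifications;  /* kernel notifications (unused here) */
} answerbox_t;

/* Task A sends a call: it lands in B's incoming call queue. */
bool ipc_send(answerbox_t *target, call_t c)
{
    return queue_push(&target->incoming, c);
}

/* Task B fetches a call: it moves from incoming to dispatched. */
bool ipc_fetch(answerbox_t *box, call_t *out)
{
    if (box->dispatched.count == QUEUE_CAP)
        return false;
    if (!queue_pop(&box->incoming, out))
        return false;
    return queue_push(&box->dispatched, *out);
}

/* Task B answers call `id`: it leaves B's dispatched queue and the
 * result is placed into the answer queue of the caller. */
bool ipc_answer(answerbox_t *server, answerbox_t *client, int id, long retval)
{
    if (client->answers.count == QUEUE_CAP)
        return false;
    bool found = false;
    size_t n = server->dispatched.count;
    /* Rotate through the queue once, keeping all other calls in order. */
    for (size_t i = 0; i < n; i++) {
        call_t c;
        queue_pop(&server->dispatched, &c);
        if (!found && c.id == id) {
            c.retval = retval;
            queue_push(&client->answers, c);
            found = true;
        } else {
            queue_push(&server->dispatched, c);
        }
    }
    return found;
}
```

Keeping fetched calls in a separate dispatched queue is what allows the system to track calls that have been picked up but not yet answered.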

The arguments contained in the message are completely arbitrary and decided by the user. The low-level part of the kernel IPC fills in appropriate error codes if an error occurs during communication, so applications are guaranteed to be correctly notified about the state of the communication. If a program closes the outgoing connection, the target answerbox receives a hangup message. The connection identification is not reused until the hangup message is acknowledged and all other pending messages are answered.

Closing an incoming connection is done by responding to any incoming message with an EHANGUP error code. The connection is then immediately closed. The client connection identification (phone id) is not reused until the client closes its own side of the connection ("hangs up its phone").

When a task dies (whether voluntarily or by being killed), a cleanup process is started. The cleanup process:

1. hangs up all outgoing connections and sends hangup messages to all target answerboxes,
2. disconnects all incoming connections,
3. disconnects from all notification channels,
4. answers all unanswered messages from answerbox queues with an appropriate error code and
5. waits until all outgoing messages are answered and all remaining answerbox queues are empty.

On top of this simple protocol, the kernel provides special services closely related to inter-process communication. A range of method numbers is allocated and a protocol is defined for these functions. These messages are interpreted by the kernel layer, and appropriate actions are taken depending on the parameters of the message and the answer.

The kernel provides the following services:

- creating a new outgoing connection,
- creating a callback connection,
- sending an address space area and
- asking for an address space area.

On startup, every task is automatically connected to a naming service task, which provides switchboard functionality. In order to open a new outgoing connection, the client sends a CONNECT_ME_TO message using any of its phones. If the recipient of this message answers with an accepting answer, a new connection is created. In itself, this mechanism would only allow duplicating an existing connection. However, if the message is forwarded, the new connection is made to the final recipient.
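
The effect of forwarding on CONNECT_ME_TO can be illustrated with a toy model. This is a sketch only: the structure `task_t`, its fields, the hop limit and the function names are hypothetical, and forwarding is reduced to following a pointer rather than passing a real message.

```c
#include <stddef.h>

#define MAX_PHONES 8   /* hypothetical per-task phone table size */
#define MAX_HOPS   4   /* hypothetical guard against forwarding loops */

typedef struct task task_t;
struct task {
    /* phones[i] points to the task whose answerbox phone i reaches. */
    task_t *phones[MAX_PHONES];
    /* If non-NULL, CONNECT_ME_TO requests are forwarded to this task. */
    task_t *forward_to;
    /* Non-zero if the task answers CONNECT_ME_TO with acceptance. */
    int accepts;
};

/* Connect the first free phone of `t` to `target`; -1 if none is free. */
int phone_alloc(task_t *t, task_t *target)
{
    for (int i = 0; i < MAX_PHONES; i++) {
        if (t->phones[i] == NULL) {
            t->phones[i] = target;
            return i;
        }
    }
    return -1;
}

/* Send CONNECT_ME_TO over `phone`. The request follows the chain of
 * forwards, and the new phone is connected to the final recipient.
 * Returns the new phone id, or -1 if the request was refused. */
int connect_me_to(task_t *client, int phone)
{
    if (phone < 0 || phone >= MAX_PHONES || client->phones[phone] == NULL)
        return -1;
    task_t *recipient = client->phones[phone];
    for (int hops = 0; recipient->forward_to != NULL; hops++) {
        if (hops == MAX_HOPS)
            return -1;
        recipient = recipient->forward_to;
    }
    if (!recipient->accepts)
        return -1;
    return phone_alloc(client, recipient);
}
```

Without forwarding, the new phone simply duplicates the connection to the task that received the request; with forwarding through the naming service, it ends up connected to the service that finally accepted it.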

In order for a task to be able to forward a message, it must have a phone connected to the destination task. The destination task establishes such a connection by sending the CONNECT_TO_ME message to the forwarding task. A callback connection is opened afterwards. Every service that wants to receive connections has to ask the naming service to create the callback connection via this mechanism.

Tasks can share their address space areas using IPC messages. The two message types, AS_AREA_SEND and AS_AREA_RECV, are used for sending and receiving an address space area, respectively. The shared area can be accessed as soon as the message is acknowledged.

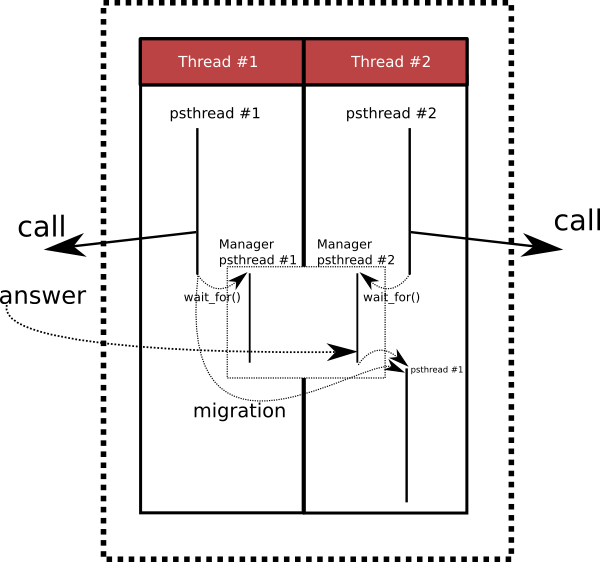

The conventional design of the asynchronous API seems to produce applications with one event loop and several big switch statements. However, by intensive utilization of userspace pseudo threads, it was possible to create an environment that is not necessarily restricted to this type of event-driven programming and allows for more fluent expression of application programs.

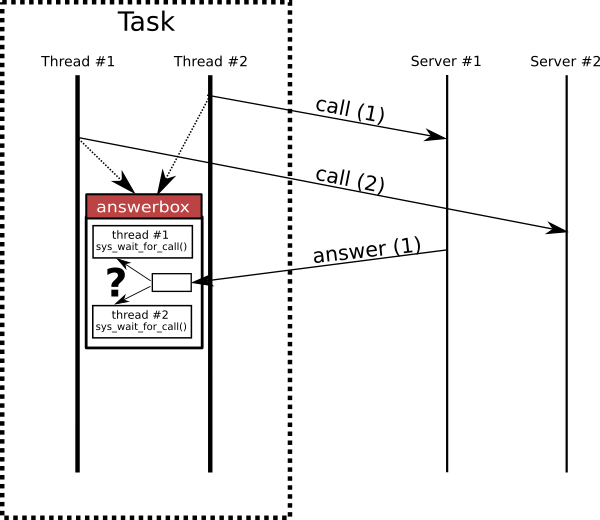

Each task is associated with only one answerbox. If a multithreaded application needs to communicate, it must be able not only to send a message but also to retrieve the answer. If several pseudo threads pull messages from the task answerbox, it is a matter of coincidence which thread receives which message. If a particular thread needs to wait for a message answer, an idle manager pseudo thread is found or a new one is created, and control is transferred to this manager thread. The manager threads pop messages from the answerbox and put them into the appropriate queues of running threads. If a pseudo thread waiting for a message is not running, control is transferred to it.

A very similar situation arises when a task decides to send a lot of messages and reaches the kernel limit of asynchronous messages. In such a situation, two remedies are available: the userspace library can either cache the message locally and resend it when some answers arrive, or it can block the thread and let it continue only after the message is finally sent to the kernel layer. With one exception, HelenOS uses the second approach: when the kernel responds that the maximum limit of asynchronous messages was reached, control is transferred to a manager pseudo thread. The manager thread then handles incoming replies and, when space is available, sends the message to the kernel and resumes the execution of the application thread.
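
The blocking approach can be sketched as a retry loop around a simulated kernel. The structure `sim_t`, the constant `KERNEL_LIMIT` and all function names are hypothetical, and the manager pseudo thread is reduced to an ordinary function call; in a real system it would block waiting for answers instead of giving up.

```c
#include <stdbool.h>

#define KERNEL_LIMIT 2   /* hypothetical limit for this simulation */

typedef struct {
    int in_flight;        /* messages sent and not yet answered */
    int answers_ready;    /* answers waiting in the answerbox */
    int answers_handled;  /* answers processed by the manager so far */
} sim_t;

/* Simulated kernel: refuses the message once the limit is reached. */
bool kernel_try_send(sim_t *s)
{
    if (s->in_flight >= KERNEL_LIMIT)
        return false;
    s->in_flight++;
    return true;
}

/* Simulated manager: handles one incoming answer, freeing one slot.
 * Returns false if there is nothing to handle. */
bool manager_handle_one(sim_t *s)
{
    if (s->answers_ready == 0 || s->in_flight == 0)
        return false;
    s->answers_ready--;
    s->in_flight--;
    s->answers_handled++;
    return true;
}

/* Blocking send: when the kernel refuses, hand control to the manager
 * until a slot is free, then retry. Returns false only where a real
 * implementation would keep waiting for an answer to arrive. */
bool send_blocking(sim_t *s)
{
    while (!kernel_try_send(s)) {
        if (!manager_handle_one(s))
            return false;
    }
    return true;
}
```

From the application thread's point of view the send merely takes longer; answers keep being processed while it waits.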

If a kernel notification is received, the servicing procedure is run in the context of the manager pseudo thread. Although it would be possible to allow recursive calling, it could potentially lead to an explosion of manager threads. Thus, the kernel notification procedures are not allowed to wait for a message result; they can only answer messages and send new ones without waiting for their results. If the kernel limit for outgoing messages is reached, the data is automatically cached within the application. This behaviour is enforced automatically and the decision making is hidden from the developer.

Unfortunately, the real world is never so simple. For example, if a server handles incoming requests and sends asynchronous messages as part of its response, it can easily be preempted and another thread may start intervening. This can happen even if the application uses only one userspace thread. Classical synchronization using semaphores is not possible, as locking on them would block the thread completely, so that the answer could never be processed. The IPC framework allows a developer to specify that a part of the code should not be preempted by any other pseudo thread (except notification handlers), while still being able to queue messages belonging to other pseudo threads and regain control when the answer arrives.

This mechanism works transparently in a multithreaded environment, where an additional locking mechanism (futexes) should be used. The IPC framework ensures that there will always be enough free userspace threads to handle incoming answers and allows the application to run more pseudo threads inside the userspace threads without the danger of locking all userspace threads in futexes.

The interface was developed to be as simple to use as possible. Typical applications simply send messages, occasionally wait for an answer and check the results. If the number of sent messages is higher than the kernel limit, the flow of the application is stopped until some answers arrive. Server applications, on the other hand, are expected to work in a multithreaded environment.

The server interface requires the developer to specify a connection_thread function. When a new connection is detected, a new pseudo thread is automatically created and control is transferred to this function. The code then decides whether to accept the connection and creates a normal event loop. The userspace IPC library ensures correct switching between several pseudo threads within the kernel environment.
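
The general shape of such a function might look like the sketch below. Only the name connection_thread comes from the text above; its signature, the type `conn_t`, the helpers `conn_get_call` and `conn_answer`, and the method and return codes are all hypothetical stand-ins, and the library call that would block the pseudo thread is replaced by reading from a prepared array.

```c
#include <stddef.h>

/* Hypothetical method numbers and return codes. */
enum { M_CONNECT = 1, M_PING = 2, M_HANGUP = 3 };
enum { R_OK = 0, R_REFUSED = -1, R_ENOTSUP = -2 };

#define MAX_ANSWERS 16

typedef struct {
    int method;
    int arg;
} call_t;

/* Simulated connection: a scripted list of calls and a log of answers. */
typedef struct {
    const call_t *calls;
    size_t ncalls;
    size_t next;
    int answers[MAX_ANSWERS];
    size_t nanswers;
} conn_t;

/* Stand-in for the library call that would suspend the pseudo thread
 * until the next call on this connection arrives. */
const call_t *conn_get_call(conn_t *c)
{
    return c->next < c->ncalls ? &c->calls[c->next++] : NULL;
}

/* Stand-in for answering the call that was fetched last. */
void conn_answer(conn_t *c, int retval)
{
    if (c->nanswers < MAX_ANSWERS)
        c->answers[c->nanswers++] = retval;
}

/* Runs in its own pseudo thread, one instance per connection. */
void connection_thread(conn_t *c)
{
    const call_t *call = conn_get_call(c);
    if (call == NULL)
        return;
    if (call->method != M_CONNECT) {
        conn_answer(c, R_REFUSED);   /* refuse the connection */
        return;
    }
    conn_answer(c, R_OK);            /* accept the connection */

    /* Ordinary event loop for the lifetime of the connection. */
    while ((call = conn_get_call(c)) != NULL) {
        switch (call->method) {
        case M_PING:
            conn_answer(c, call->arg + 1);
            break;
        case M_HANGUP:
            conn_answer(c, R_OK);
            return;
        default:
            conn_answer(c, R_ENOTSUP);
            break;
        }
    }
}
```

Because every connection gets its own pseudo thread, the loop can be written as straight-line code for a single client rather than as one large switch over all connections.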